How Design Systems Pay Dividends at Scale

A design system is not a component library. It's a decision record that encodes your brand's strategic constraints into every future design choice automatically. Most scaling companies have the former and mistake it for the latter — and the gap between the two is where brand coherence goes to die.

You've seen the evidence. Pull up your product, your marketing site, and your latest sales deck side by side. The typography doesn't quite match. The button treatments diverge. Spacing runs tighter in the product, looser on the marketing pages, and follows no discernible logic in the deck. Nobody made a mistake. The team grew from eight people to sixty, and the shared understanding of how things should look and feel stopped fitting inside a few people's heads. The design system you invested in six months ago exists in Figma — fully documented, officially launched. People just aren't using it consistently. Or worse, they are using it, and the output is still drifting. That drift isn't a cosmetic issue you can fix with a round of visual QA. It's a structural failure in what the system actually contains. Without the decisions and brand logic that produced those components, the system is a suggestion. And suggestions don't survive decentralised execution.

The Component Library Trap — Why Most Design Systems Fail Before They Start

What a Component Library Actually Gives You (and Where It Stops)

A component library is an inventory of outputs: visual elements stripped of the reasoning that produced them. It answers "what does this look like?" but never "why does it look this way?" or "when should I use this variant versus that one?" The distinction barely matters in a small team where shared context is implicit. The designer who built the components sits three desks away. Questions get answered in conversation. The library works as a shortcut to avoid rebuilding things from scratch, and that's genuinely useful. The trouble starts the moment implicit context becomes unreliable. Across the scale-ups we've worked with, the break point consistently lands between fifteen and twenty-five people — or more precisely, the moment the first designer joins who wasn't there when the library was created. That's the threshold where unspoken logic evaporates, and the library has nothing to offer in its place.

The pattern is predictable. A new designer needs to build a feature page. The library has a "Card" component with six variants. Nothing explains which variant fits which context, why the padding values are what they are, or what relationship the card's visual weight has to the broader information hierarchy. The designer picks the variant that looks right, detaches it to adjust spacing for their layout, and the drift begins. This isn't a discipline failure. It's an information architecture failure. The system stored the artifact but discarded the intelligence that made the artifact coherent.

Premature Abstraction — When Systematising Too Early Destroys Trust

Before we address what a design system should be, it's worth understanding the second way component-first thinking fails: systematising before you have the production evidence to know what the right abstractions are. A team builds a "Card" component with fourteen variants before they've shipped enough product to know which variants genuinely recur. Designers encounter components that don't quite fit their real use cases, learn to detach and customise, and the system loses credibility within weeks.

The damage here is deeper than wasted effort. Trust in a design system, once lost, is extraordinarily difficult to rebuild — and the mechanism is specific. Designers develop learned-bypass behaviour: a reflexive assumption that building from scratch will be faster and better than working within the system. That behaviour calcifies into team narrative ("the system doesn't really work for what we need"), and narrative persists long after the system improves because nobody re-tests an assumption they've already internalised as fact. You end up maintaining a system that your own team treats as optional, which is worse than having no system at all because you're paying the maintenance cost without receiving the coherence benefit.

The practical safeguard is what engineers call the rule of three: don't codify a pattern as a system component until it has appeared in at least three distinct, real production contexts. This ensures the abstraction reflects genuine recurring need rather than one designer's prediction. The implication for timing is significant. The right moment to invest heavily in a design system isn't a team-size threshold — it's a production-volume threshold. When you've shipped enough surfaces that genuine recurring patterns are observable across contexts, you have raw material for reliable abstraction. Before that point, a lightweight style guide and shared principles will serve you better than a premature component system.
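The rule of three can be made checkable rather than left to memory. The sketch below is one possible way to do it, assuming you keep some log of where patterns have actually shipped. The log format, the pattern names, and the `ready_to_codify` helper are all hypothetical and exist only for illustration.

```python
from collections import defaultdict

# Hypothetical usage log: (pattern name, production context where it shipped).
SIGHTINGS = [
    ("pricing-card", "web-app/billing"),
    ("pricing-card", "marketing/pricing-page"),
    ("pricing-card", "email/upgrade-offer"),
    ("stat-tile", "web-app/dashboard"),
    ("stat-tile", "web-app/dashboard"),  # the same context twice counts once
    ("stat-tile", "sales-deck/overview"),
]


def ready_to_codify(sightings, threshold=3):
    """Return, sorted, the patterns seen in at least `threshold` distinct contexts."""
    contexts = defaultdict(set)
    for pattern, context in sightings:
        contexts[pattern].add(context)
    return sorted(p for p, seen in contexts.items() if len(seen) >= threshold)


candidates = ready_to_codify(SIGHTINGS)
print(candidates)
```

Counting distinct contexts, not raw occurrences, is the point: a pattern reused five times on one page is still a single piece of evidence.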

The Decision Record — What a Design System Should Actually Be

A genuine design system is a decision record: a living repository of why choices were made, the constraints that shaped them, and the rules governing how elements combine. The components are artefacts of those decisions, not the system itself. When the primary CTA's weight, colour, and padding are accompanied by their rationale — this treatment exists because our positioning prioritises confident authority, our accessibility standards require AAA contrast at this size, and conversion testing confirmed a 14% lift over the previous treatment — the system becomes self-defending. Designers follow it because they understand the logic, not because a Confluence page told them to. Understanding scales. Enforcement doesn't. And the design system vs. component library distinction isn't semantic — it's the operational difference between a system that survives contact with a growing team and one that collapses under the weight of its own undocumented assumptions.
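To make the idea concrete, here is a minimal sketch of what one entry in a decision record might look like as data. The `Decision` structure, its field names, and the example values are assumptions for illustration, not a prescribed schema. The useful property is that a missing rationale becomes something you can query for.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """One entry in the decision record: a choice plus the reasons behind it."""
    component: str
    choice: str
    rationale: list[str] = field(default_factory=list)


def undocumented(decisions):
    """Return the components whose choices carry no recorded rationale."""
    return [d.component for d in decisions if not d.rationale]


# Illustrative entries only; the values are placeholders.
RECORD = [
    Decision(
        component="primary-cta",
        choice="weight, colour, and padding as specified in the system",
        rationale=[
            "positioning prioritises confident authority",
            "accessibility standard requires AAA contrast at this size",
            "conversion testing favoured this treatment over the previous one",
        ],
    ),
    Decision(component="card", choice="six variants"),  # no reasoning captured
]

missing = undocumented(RECORD)
print(missing)
```

An audit like this will not tell you whether the rationale is any good, only whether it exists, which is usually the first gap to close.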

The Business Case for Design System Consistency

Most teams underestimate how quickly inconsistency compounds because they evaluate it as isolated incidents rather than a systemic dynamic. So let's run the actual numbers. Take a typical scale-up scenario: three products, four surfaces each (web app, marketing site, emails, sales collateral), twelve designers, twenty core components. That's 240 product-surface-component combinations (3 × 4 × 20). Without systematised constraints, assume each designer introduces an average of two custom variants per component per quarter, a conservative figure for a team without decision documentation. After a single quarter, you're looking at roughly 480 undocumented variants (12 × 20 × 2); after six months, closer to 960. Each variant is a separate test case for QA, a separate code path for engineering, and a separate judgment call for every future designer who encounters it and has to determine whether it's intentional or accidental. The remediation cost to audit and reconcile those variants back into a coherent system typically runs four to six weeks of dedicated design and engineering time, and that time produces zero new product value.
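The arithmetic is simple enough to rerun with your own team's numbers. The sketch below uses the scenario's stated inputs. The function names are ours, and the model assumes drift accumulates linearly with no variants ever being cleaned up, which is a simplification.

```python
def combinations(products, surfaces_per_product, components):
    """Product-surface-component combinations the system has to cover."""
    return products * surfaces_per_product * components


def undocumented_variants(designers, components, variants_per_quarter, quarters):
    """Custom variants accumulated if every designer drifts at the stated rate."""
    return designers * components * variants_per_quarter * quarters


combos = combinations(products=3, surfaces_per_product=4, components=20)
per_quarter = undocumented_variants(
    designers=12, components=20, variants_per_quarter=2, quarters=1
)
print(combos, per_quarter)
```

With the scenario's inputs, one quarter at the stated rate yields 480 variants, and the total grows by the same amount every additional quarter the drift goes unaddressed.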

But the internal cost is the smaller problem. Inconsistency is a market signal, and this is where the design system ROI argument gets genuinely compelling. Users and buyers develop trust through pattern recognition. When the product feels one way, the marketing site feels another, and the onboarding email feels a third, the implicit message is that this company hasn't figured out who it is yet. For scale-ups competing against incumbents with decades of accumulated brand equity, coherence is one of the few accessible advantages. You can't outspend the incumbent on media. You can out-cohere them across every touchpoint. A consistent experience signals organisational maturity that belies your actual size — and that perception directly affects close rates, retention, and willingness to pay premium pricing. The design system is the mechanism that makes that coherence operational, which makes it a revenue instrument, not an overhead cost.

Brand Strategy Has to Enter the System — Not Sit Next to It

The Fatal Gap Between Guidelines and Components

The typical brand-to-product pipeline has a structural break that most organisations never notice until the damage is visible. A brand team produces guidelines — personality attributes, tone of voice, visual principles — often captured in a PDF or a set of Notion pages. A product design team builds components — buttons, forms, navigation patterns — captured in Figma and code. These two artefacts coexist in the organisation. They may even reference each other occasionally. But they are never mechanically connected. The guidelines say "confident and precise." The component library contains buttons and cards with zero embedded logic connecting them to "confident and precise." The translation from abstract brand attribute to concrete design constraint happens inside individual designers' heads — assuming they've read the guidelines, internalised them correctly, and remembered to apply them under deadline pressure. When brand strategy and visual identity exist as separate documents maintained by separate teams with separate review cycles, you don't have an integrated system. You have a gap papered over with good intentions.

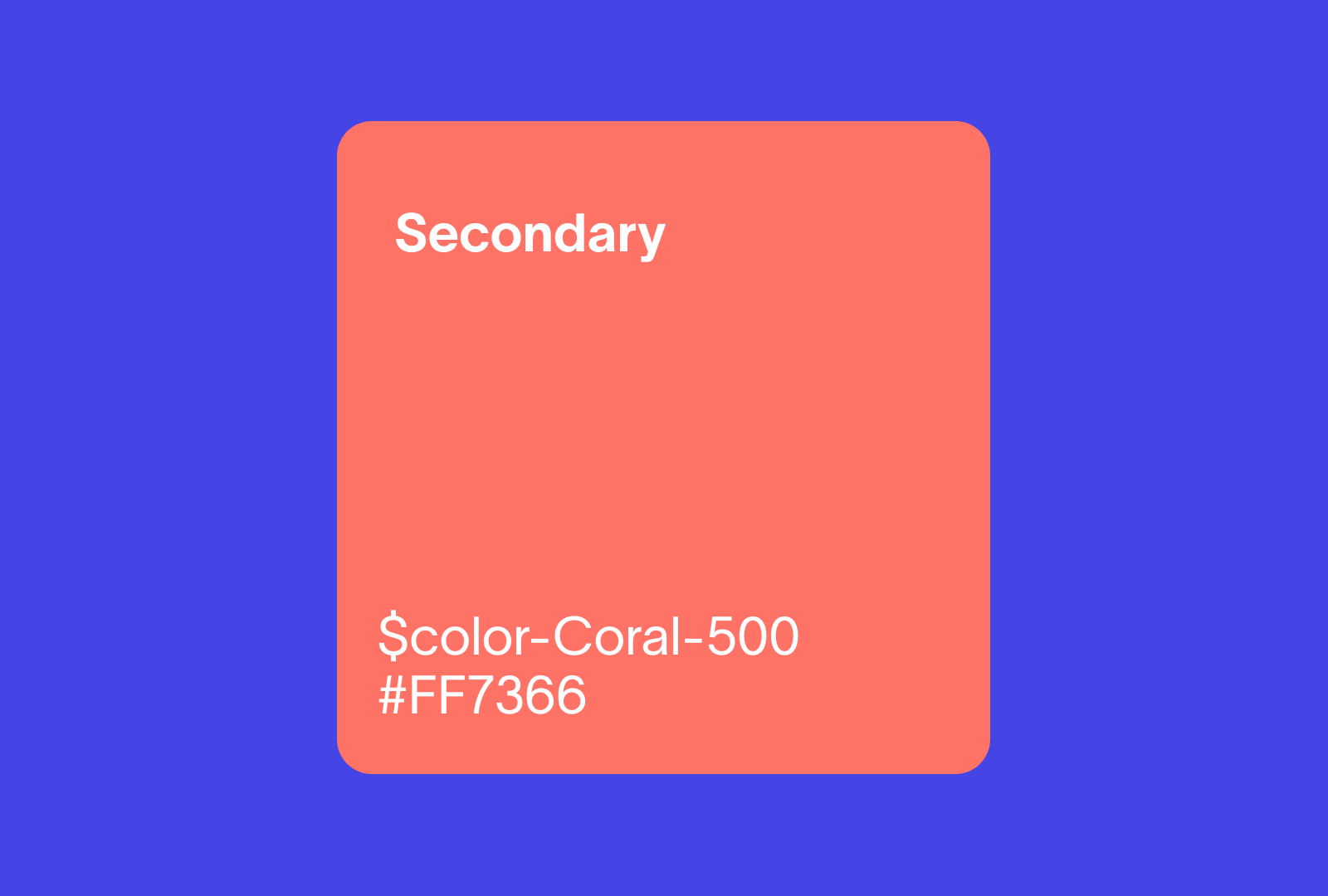

The Brand Encoding Matrix — Translating Strategy Into Constraints

The missing mechanism is a structured translation layer that converts each brand attribute into visual principles, system constraints, and behavioural rules. We call it a Brand Encoding Matrix, and its power becomes visible when you see how different brand attributes produce completely different but internally consistent constraint cascades through the same translation mechanism.

Take a brand attribute like "Precision." The visual principle that follows: tight grid adherence, minimal decoration, a mathematical spacing scale. The system constraint: a 4px base unit, no custom spacing values permitted, strict component padding rules. The behavioural rule: animations use eased cubic-bezier curves that resolve quickly — no playful bounces, no elastic overshoots. Every micro-interaction communicates control and exactness.

Now contrast that with "Warmth." The visual principle: generous whitespace, rounded corners, a humanist type family. The system constraint: an 8px base unit with permitted half-step exceptions for optical adjustment, border-radius minimums of 8px on interactive elements. The behavioural rule: transitions use slightly longer durations with gentle easing, so elements arrive rather than snap. Every interaction communicates approachability and care.

Same translation mechanism. Completely different outputs. Each brand attribute generates a cascade of specific, testable constraints that flow directly into the design system. Without this translation layer, the system is brand-adjacent but not brand-encoded. The distinction matters because brand-adjacent systems drift without anyone recognising the drift as a brand problem. It just looks like "different design preferences" — and different preferences feel like reasonable creative expression rather than what they actually are: the slow dissolution of your positioning into visual noise.

The Brand Encoding Matrix makes strategy and design inseparable not as an abstract philosophy but as a specific, auditable set of translation rules. Strategy without design encoding is aspirational but unenforceable. Design encoding without strategy is consistent but arbitrary. The matrix connects them mechanically.
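"Testable" can be taken literally. The sketch below encodes the two example attributes as data and checks values against them. It is a minimal illustration under stated assumptions, not a reference implementation: the `Encoding` structure and the function names are hypothetical, and the zero minimum radius for "Precision" is our assumption, since the example above does not specify one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Encoding:
    """One row of the matrix: a brand attribute and its system constraints."""
    attribute: str
    base_unit_px: int
    allow_half_steps: bool  # permitted half-step exceptions for optical adjustment
    min_radius_px: int      # minimum border-radius on interactive elements


MATRIX = {
    # min_radius_px=0 for Precision is an assumption; the text gives no value.
    "precision": Encoding("Precision", base_unit_px=4, allow_half_steps=False, min_radius_px=0),
    "warmth": Encoding("Warmth", base_unit_px=8, allow_half_steps=True, min_radius_px=8),
}


def check_spacing(encoding, value_px):
    """Classify a spacing value as 'ok', 'exception', or 'violation'."""
    if value_px > 0 and value_px % encoding.base_unit_px == 0:
        return "ok"
    half = encoding.base_unit_px // 2
    if encoding.allow_half_steps and value_px > 0 and value_px % half == 0:
        return "exception"  # allowed, but should be a deliberate optical adjustment
    return "violation"


def check_radius(encoding, radius_px):
    """True if an interactive element's border-radius meets the minimum."""
    return radius_px >= encoding.min_radius_px


print(check_spacing(MATRIX["precision"], 10))
print(check_spacing(MATRIX["warmth"], 12))
print(check_radius(MATRIX["warmth"], 4))
```

The same 12px value is on-scale for one attribute and a flagged exception for the other, which is the cascade described above expressed as a rule a linter or design-tool plugin could run.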

Once you've encoded brand logic into the system, the next question becomes urgent: who maintains and evolves that encoding as the organisation grows?

Governance — The Layer That Determines Whether Your System Lives or Dies

Choosing the Right Contribution Model

Governance is where design systems succeed or fail, and it's the layer most commentary skips because it's organisationally messy. Three models exist on a spectrum, and each has specific prerequisites.

A centralised model (one dedicated team owns, builds, and maintains everything) delivers the highest consistency but creates a bottleneck. It works when the system is stable enough that product teams rarely need new components. It fails at growth-stage companies where product teams need to move faster than the central team can respond, which is nearly always.

A federated model (product teams contribute components while a core systems team reviews and standardises) balances speed with consistency. It requires clear contribution guidelines, a reliable review cadence, and a core team with enough organisational authority to reject contributions that don't meet standards. This is the model that works for most scale-ups.

A distributed model (anyone contributes, governance is peer-review-based) delivers the highest velocity but the lowest consistency guarantee. It only functions in organisations where design principles are so deeply internalised that peer review reliably catches drift. Adopting this model prematurely is one of the most common causes of system fragmentation.

The Adoption Flywheel

Adoption isn't a launch event. It's a self-reinforcing loop that spins in one of two directions. The positive flywheel: system quality generates designer and developer trust, which drives consistent usage, which reduces rework, which frees resources for system improvement, which raises quality further. The negative flywheel: low quality or incomplete coverage forces designers to encounter gaps, which produces workarounds and detached components, which creates visible inconsistency, which generates the narrative that "the system doesn't work," which reduces investment, which lowers quality further. Most organisations stuck in the negative flywheel misdiagnose the problem. They see symptoms — designers aren't following the system — and treat them as behaviour issues. The intervention point isn't enforcement. It's system quality and coverage. If your system is being routinely bypassed, the first question isn't "how do we enforce compliance?" It's "what gap in the system made bypassing it the rational choice?"

The Sanctioned Pressure Valve

Every system that survives long-term includes a formal deviation process. Campaign landing pages, product experiments, exploratory design concepts — these need space to exist outside the system's constraints without being treated as violations. Without a sanctioned pressure valve, two things happen: either the system becomes associated with rigidity and teams stop engaging with it entirely, or teams bypass it quietly and the official system diverges from actual practice. A functional deviation process requires four elements: a clear request mechanism, defined criteria for what qualifies as legitimate deviation, a review step to determine whether the deviation reveals a gap the system should absorb, and a sunset rule for experimental patterns that weren't promoted into the system. Defining the rules and the legitimate process for operating outside them is what separates a governance model from a policing model.
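The four elements map naturally onto a small record with a lifecycle. The sketch below shows one way that could look. The field names, the criteria labels, and the `status` function are hypothetical, and a real process would live in whatever tracker your team already uses.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical labels for what qualifies as a legitimate deviation.
ALLOWED_CRITERIA = {"campaign", "experiment", "exploration"}


@dataclass
class Deviation:
    """A sanctioned exception to the system."""
    requested_by: str         # request mechanism: who asked, on the record
    criterion: str            # which qualifying criterion the request cites
    reveals_system_gap: bool  # review outcome: should the system absorb this?
    sunset: date              # experimental patterns expire unless promoted
    promoted: bool = False


def status(dev, today):
    """Classify a deviation as rejected, promoted, expired, or active."""
    if dev.criterion not in ALLOWED_CRITERIA:
        return "rejected"
    if dev.promoted:
        return "promoted"
    if today > dev.sunset:
        return "expired"
    return "active"


landing = Deviation("growth-team", "campaign", False, sunset=date(2025, 3, 31))
print(status(landing, date(2025, 2, 1)))
print(status(landing, date(2025, 4, 1)))
```

The sunset date does the quiet work here: a deviation that is neither promoted nor renewed stops being sanctioned by default, so the official system and actual practice cannot drift apart unnoticed.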

The Design System's Most Important Users Aren't Designers

At scale, the people making the most design-adjacent decisions are not your design team. They're product managers writing specs that imply layout choices, engineers making spacing calls during implementation, content writers structuring information hierarchy, and marketing teams assembling landing pages and emails. If the design system only functions when a trained designer reviews every output, it hasn't solved the scale problem — it's added a bottleneck dressed up as a process improvement. The system's job is to make off-brand output structurally difficult for everyone who touches brand-facing material, not by restricting autonomy but by encoding enough constraint that the default output is on-brand without requiring conscious design knowledge.

This is exactly where Webflow becomes a strategic advantage rather than just a CMS preference. A well-architected Webflow build is a design system implementation — global styles, component structures, CMS-driven templates, and class naming conventions that encode brand constraints directly into the editing environment. Marketing teams publish landing pages without a designer in the loop because the system structurally prevents off-brand output. The constraints are architectural, not instructional. A content editor can change copy, swap images, and adjust page structure, but they cannot break the spacing system, override the type scale, or produce a layout that violates the grid. The question of "who is this for?" determines everything about what a design system contains. Build it for designers and you get a Figma toolkit. Build it as a governance mechanism for the entire organisation and you get coherence at scale.

Design System Maturity: Where Does Your System Actually Sit?

Most scale-ups overestimate their system's maturity by at least one level. Here's how to locate yours honestly:

Level 0 — Freehand. No shared system. Every designer makes independent decisions. Output consistency depends entirely on how recently people have talked to each other. This is every company's starting point, and some stay here longer than they'd admit.

Level 1 — The Style Guide. A static document of visual choices — colours, fonts, logo usage — consulted occasionally, embedded in nothing. Better than freehand, but only barely, because it requires active remembering to have any effect.

Level 2 — The Component Library. Reusable UI elements in Figma and possibly code, covering the most common patterns. No documented decision logic, no governance process, no formal contribution model. This is where most scale-ups live, and where most scale-ups are stuck. The system feels real but doesn't actually prevent drift.

Level 3 — The Integrated Design System. Components plus decision documentation, design tokens, code implementation, and an active governance process with a defined owner. The system is embedded in the production workflow, not adjacent to it. This is the minimum viable level for any company that needs coherence across multiple products or teams.

Level 4 — The Living Brand System. Continuous contribution, brand strategy mechanically encoded through a framework like the Brand Encoding Matrix, sanctioned deviation processes, and a system that informs product and brand decisions rather than merely executing them. The system is an organisational asset, not a design team deliverable.
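One way to keep the self-assessment honest is to treat each level as a checklist that must be fully satisfied before the next one counts. The sketch below does that. The capability names are our shorthand for the traits listed above, and the strict cumulative ordering is an assumption of this sketch.

```python
def maturity_level(capabilities):
    """Map a set of capability flags to the highest level fully satisfied."""
    requirements = [
        (1, {"style_guide"}),
        (2, {"component_library"}),
        (3, {"decision_docs", "design_tokens", "code_implementation", "governance_owner"}),
        (4, {"brand_encoding", "deviation_process", "continuous_contribution"}),
    ]
    level = 0
    for candidate, needed in requirements:
        if not needed <= capabilities:
            break  # levels are cumulative: a missing requirement caps the result
        level = candidate
    return level


# A team with tokens but no decision docs or governance owner is still Level 2.
team = {"style_guide", "component_library", "design_tokens"}
print(maturity_level(team))
```

Having some Level 3 traits does not make a Level 3 system, which is exactly how teams end up overestimating by a level.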

The jump from Level 2 to Level 3 is the hardest and most consequential transition — it's where governance, decision encoding, and brand integration enter the picture. If you're uncertain where you sit, here are three diagnostic questions that reliably reveal the truth:

These three questions will tell you whether you need incremental improvement or a structural rebuild. Be honest with the answers. Aspirational self-assessment is the single most common reason design system investments fail to produce returns.

Why a Stricter System Produces Better Creative Work

The common objection — "won't a rigid system kill creativity?" — gets the tradeoff backwards. The real question is whether your designers are spending their creative energy on decisions that benefit from creative variation or decisions that don't. At scale, the answer is damning. The average screen involves roughly forty micro-decisions — which grey, which spacing value, which corner radius, which type size for this context — that have correct answers rather than creative answers. When those decisions are systematised, designers stop spending cognitive cycles on them. The creative capacity freed up goes to the work that actually differentiates: layout strategy, narrative flow, interaction choreography, and solving genuinely novel problems.

We've seen this pattern play out concretely. One team we worked with resisted system adoption for months on creative-freedom grounds. After implementation, their designers reported that exploration time on new features increased by roughly 30% — not because they had more hours, but because they weren't rebuilding foundational patterns from scratch every sprint. The work got more creative, not less, because the system handled the commodity decisions and left humans to focus on the strategic ones.

The system also raises the floor for every piece of output the organisation produces. At scale, raising the floor matters more than raising the ceiling, because average output — not occasional exceptional work — determines aggregate brand perception. A customer doesn't experience your best landing page and your weakest email as separate events. They experience the average. A stricter system makes that average dramatically better by ensuring even the most routine, least-designer-touched output meets a baseline that reinforces rather than erodes your positioning.

Two Paths Forward

Path one: the internal audit. Use the maturity model and the three diagnostic questions above to honestly locate your current system. Share those questions with your design lead, your engineering lead, and your head of marketing in the same room. Where they disagree on the answers is where your system's real gaps live. These evaluations will tell you whether you need incremental improvement — better documentation, a governance owner, contribution guidelines — or a structural rebuild from strategy through to implementation.

Path two: close the strategy-to-production gap. For organisations where brand guidelines live in a PDF and components live in Figma and the two have never been mechanically connected, the integration problem requires more than better documentation. It requires a methodology that treats strategy, identity, system, and implementation as a unified pipeline rather than separate deliverables handed between separate teams. That's the specific problem Halo Fusion™ was designed to solve — not because integration is philosophically nice, but because every handoff between strategy and execution is a point where brand intent degrades.

The design system is the highest-leverage investment a scaling company can make in its brand. Not because it makes design faster, though it does. Not because it reduces engineering overhead, though it does that too. But because it makes brand coherence automatic — encoded into the tools and constraints that every team member works within, every day, without needing to remember or choose correctly. A design system built as a decision record, encoded with brand logic, and governed for evolution doesn't just survive growth. It compounds through it.